1. Why Retail Replenishment Is a Good ERP Stress Test

ERP demos are beautiful. Real operations are not.

Retail replenishment is one of the first processes where ERP systems stop being “forms and documents” and start being decision engines. It forces the platform to handle:

- Time-series sales data

- Forecast adjustments (seasonality, promotions)

- Supplier constraints (lead time, MOQ, pack size)

- Multi-warehouse logic

- Automatic document generation

- Human approval workflows

- Explainability of system decisions

This makes it an ideal comparison case for ERPNext and MyCompany.

The goal of this article is not marketing. It is to answer a practical CTO-level question:

How much engineering effort does it take to implement a real retail process?

And yes — we will count lines of code.

2. Business Specification (Practical, Not Imaginary)

Scenario

- 15 retail stores

- 1 central warehouse

- 4 main suppliers

- Daily POS sales

- Weekly promotions

- Mixed transfer / purchase strategy

Requirements

Every night (02:00 AM):

For each SKU per store:

- Calculate average daily sales (last 28 days)

- Adjust with a promotion factor (if active)

- Multiply by supplier lead time

- Subtract:

  - Current stock

  - Incoming purchase orders

  - Incoming transfers

- Apply:

  - MOQ (minimum order quantity)

  - Pack-size rounding

- If there is a deficit:

  - Prefer a transfer from the central warehouse (if available)

  - Otherwise suggest a Purchase Order

- Create draft documents

- Log an explanation (why this quantity)

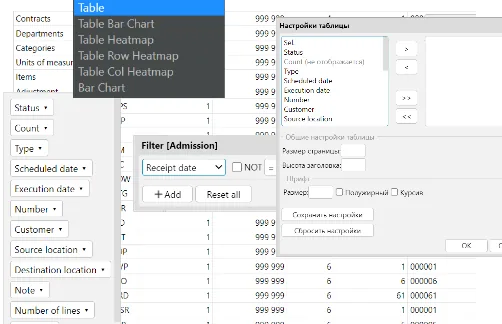

- Provide a UI screen:

  - View suggestions

  - Approve

  - Reject

  - Recalculate

Explainability example:

“Calculated need: 94.6 units (ADS 11.9 × 14 days – 32 stock – 40 incoming). Rounded up to 96 due to pack size 12.”
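The nightly calculation and its explanation string can be sketched in plain Python. This is a minimal illustration, not code from either platform: the `ReplenishmentInput` class, the `suggest` function, and the sample values are assumptions chosen so the arithmetic is easy to follow, and the MOQ is applied after pack rounding (the same order as the rounding expression shown later for MyCompany).

```python
import math
from dataclasses import dataclass


@dataclass
class ReplenishmentInput:
    """Hypothetical container for one SKU/store pair."""
    ads: float               # average daily sales over the last 28 days
    promotion_factor: float  # 1.0 when no promotion is active
    lead_time_days: int
    stock: float
    incoming: float          # open purchase orders plus inbound transfers
    moq: int
    pack_size: int


def suggest(inp: ReplenishmentInput):
    """Return (quantity, explanation), or None when there is no deficit."""
    need = (inp.ads * inp.promotion_factor * inp.lead_time_days
            - inp.stock - inp.incoming)
    if need <= 0:
        return None
    # Round up to whole packs, then enforce the minimum order quantity.
    packs = math.ceil(need / inp.pack_size)
    qty = max(packs * inp.pack_size, inp.moq)
    explanation = (
        f"Calculated need: {need:.1f} units "
        f"(ADS {inp.ads} x promo {inp.promotion_factor} x {inp.lead_time_days} days "
        f"- {inp.stock:g} stock - {inp.incoming:g} incoming). "
        f"Ordering {qty} (pack size {inp.pack_size}, MOQ {inp.moq})."
    )
    return qty, explanation


example = ReplenishmentInput(ads=11.9, promotion_factor=1.0, lead_time_days=14,
                             stock=32, incoming=40, moq=24, pack_size=12)
print(suggest(example))
```

Note that when the MOQ is not a multiple of the pack size, taking the maximum can yield a quantity that is not a whole number of packs; a real implementation would need a rule for that case.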

3. ERPNext Implementation

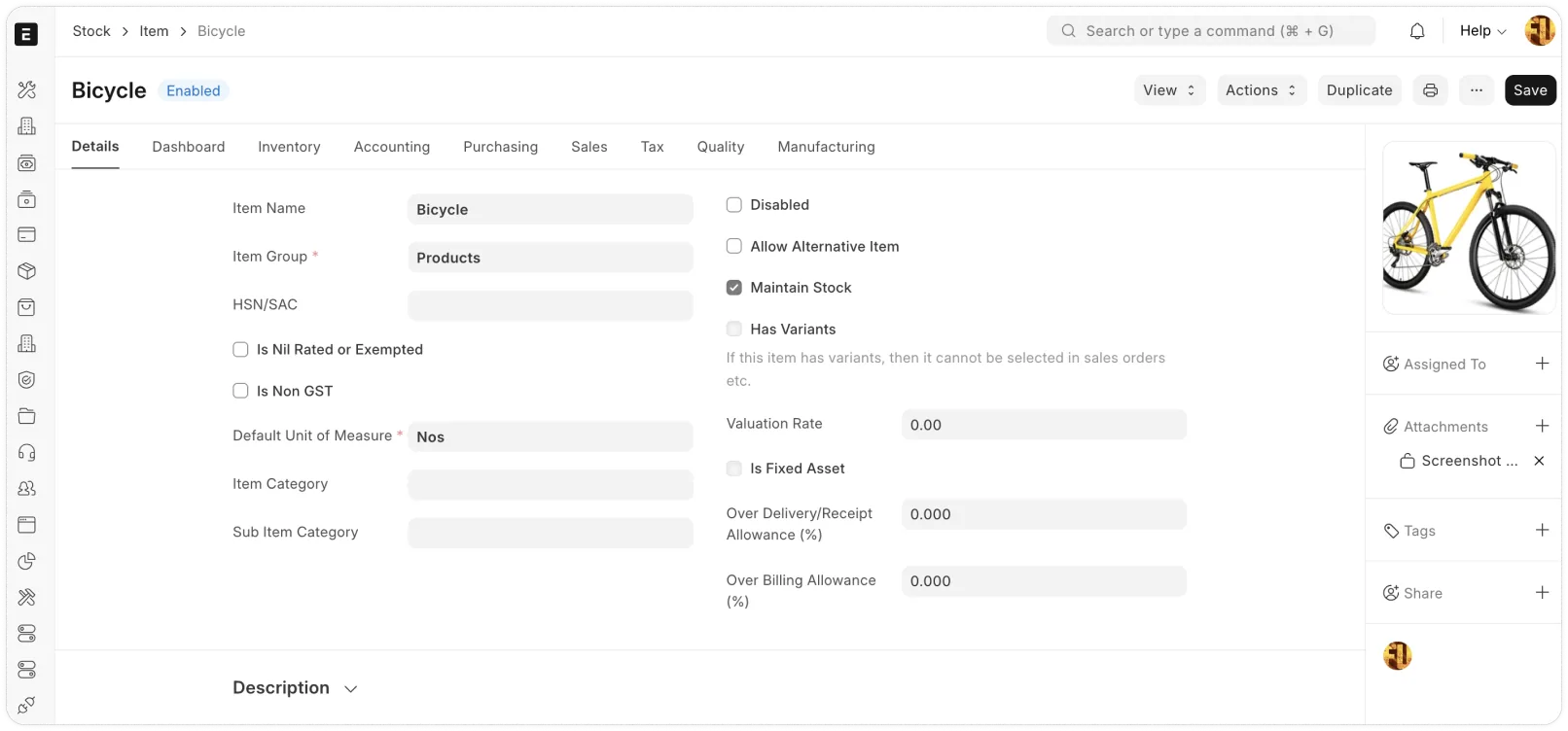

ERPNext is built on the Frappe Framework (Python backend, JavaScript frontend).

It already supports:

- Stock

- Reorder levels

- Material Requests

- Purchase Orders

- Stock Transfers

But once you add promotions, multi-store rules, MOQ/pack rounding, “buy vs transfer” logic, and explainability, you quickly go beyond the built-in reorder functionality.
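To see why “buy vs transfer” is more than a reorder level, consider a sketch of the decision across several stores that compete for the same central stock. The function name `plan_documents` and the all-or-nothing rule (a store is served by transfer only if the central warehouse can cover its full quantity) are assumptions; the specification only says to prefer a transfer when available.

```python
def plan_documents(needs, central_stock):
    """Decide, store by store, between a transfer and a purchase order.

    needs: list of (store, rounded_qty) pairs in processing order.
    central_stock: units currently available in the central warehouse.
    """
    remaining = central_stock
    documents = []
    for store, qty in needs:
        if qty <= 0:
            continue  # no deficit, nothing to create
        if remaining >= qty:
            documents.append(("Stock Transfer", store, qty))
            remaining -= qty  # later stores see the reduced availability
        else:
            documents.append(("Purchase Order", store, qty))
    return documents


print(plan_documents([("Store 01", 96), ("Store 02", 48), ("Store 03", 0)], 100))
```

The processing order matters here: whichever store is evaluated first gets the central stock, which is exactly the kind of rule the built-in reorder logic does not express.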

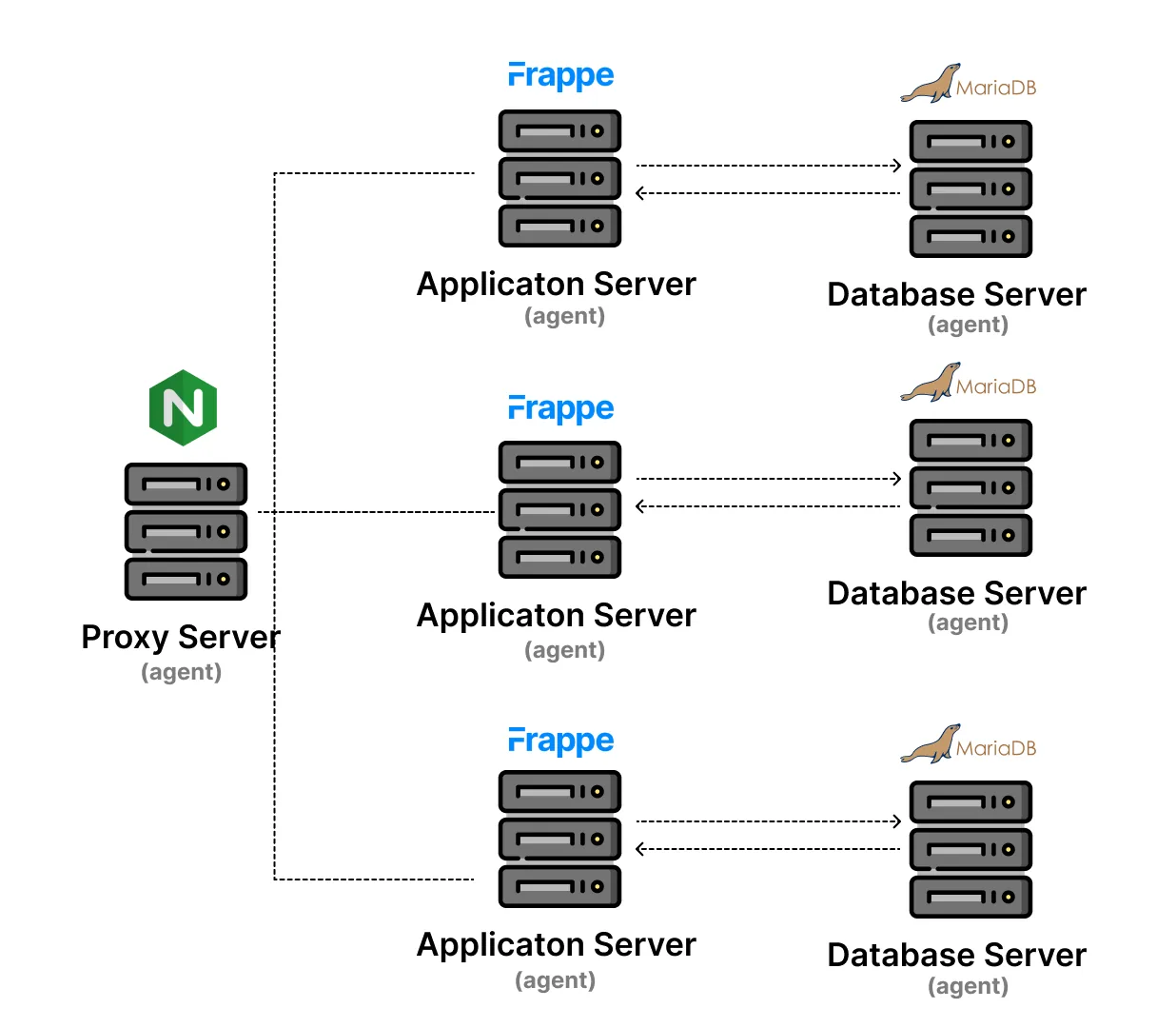

3.1 Architectural Approach

Typical implementation:

- Custom DocType: Replenishment Rule

- Scheduled Python job

- Custom server-side report

- Client-side UI actions

- Hooks for automation and wiring
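The wiring for the scheduled job lives in the app's `hooks.py`. The fragment below is a sketch of how the 02:00 run could be registered through Frappe's `scheduler_events` hook; the dotted path is an assumption derived from the file layout used in this article.

```python
# hooks.py (fragment)
scheduler_events = {
    "cron": {
        # minute hour day-of-month month day-of-week: every day at 02:00
        "0 2 * * *": [
            # Assumed module path; adjust to the real app name.
            "app.replenishment.scheduler.nightly_replenishment",
        ],
    },
}
```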

3.2 Data Model Extension

    {
      "doctype": "Replenishment Rule",
      "fields": [
        { "fieldname": "item", "fieldtype": "Link", "options": "Item" },
        { "fieldname": "warehouse", "fieldtype": "Link", "options": "Warehouse" },
        { "fieldname": "lead_time_days", "fieldtype": "Int" },
        { "fieldname": "promotion_factor", "fieldtype": "Float" },
        { "fieldname": "moq", "fieldtype": "Int" },
        { "fieldname": "pack_size", "fieldtype": "Int" }
      ]
    }

Typical footprint: ~120–180 LOC including metadata.

3.3 Scheduled Job (Core Logic)

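    # app/replenishment/scheduler.py
    import frappe

    # calculate_average_sales, get_stock, get_incoming, round_to_pack and
    # create_suggestion are the helper functions described below.

    def nightly_replenishment():
        """Create draft replenishment suggestions for every rule."""
        rules = frappe.get_all("Replenishment Rule", fields=["*"])
        for rule in rules:
            # Demand over the lead time, scaled by the promotion factor (default 1)
            avg_sales = calculate_average_sales(rule.item, rule.warehouse)
            adjusted_sales = avg_sales * (rule.promotion_factor or 1)
            required_qty = adjusted_sales * (rule.lead_time_days or 0)

            # Net off what is already on hand or on its way
            current_stock = get_stock(rule.item, rule.warehouse)
            incoming = get_incoming(rule.item, rule.warehouse)
            deficit = required_qty - current_stock - incoming

            if deficit > 0:
                rounded_qty = round_to_pack(deficit, rule.pack_size, rule.moq)
                create_suggestion(rule, rounded_qty)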

Helper functions (stock queries, SQL joins, report generation, rounding, document creation, idempotency, logging) typically add another ~180–300 LOC.
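Idempotency is the helper most often underestimated: if the nightly job is rerun, it should update the night's draft rather than create a duplicate. A minimal sketch of one way to do this, using a plain dictionary in place of the database; the names `suggestion_key` and `upsert_suggestion` and the rule "one suggestion per item, warehouse, and run date" are assumptions.

```python
import hashlib
from datetime import date


def suggestion_key(item: str, warehouse: str, run_date: date) -> str:
    """Deterministic key: one suggestion per item, warehouse, and run date."""
    raw = f"{item}|{warehouse}|{run_date.isoformat()}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:16]


def upsert_suggestion(table: dict, item: str, warehouse: str,
                      run_date: date, qty: int, explanation: str) -> str:
    """Create or refresh a draft; never overwrite a decided suggestion."""
    key = suggestion_key(item, warehouse, run_date)
    existing = table.get(key)
    if existing is not None and existing["status"] != "Draft":
        return key  # already approved or rejected: leave it untouched
    table[key] = {
        "item": item,
        "warehouse": warehouse,
        "qty": qty,
        "explanation": explanation,
        "status": "Draft",
    }
    return key
```

Running the job twice for the same night then leaves one row per item and warehouse, carrying the most recent draft quantity.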

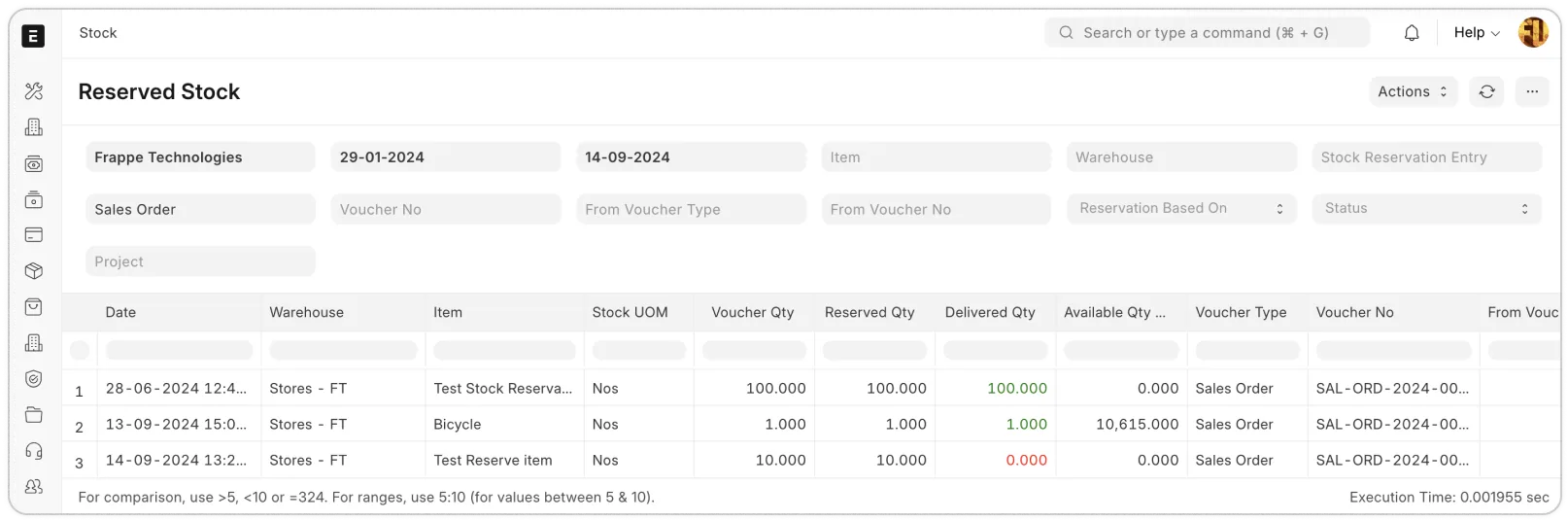

3.4 Suggestion Report

    SELECT
        item,
        warehouse,
        suggested_qty,
        explanation
    FROM `tabReplenishment Suggestion`
    WHERE status = 'Draft';

Typical footprint: ~70–120 LOC.

3.5 Client-Side Approve Action

    frappe.ui.form.on("Replenishment Suggestion", {
      approve(frm) {
        frappe.call({
          method: "app.replenishment.approve",
          args: { name: frm.doc.name },
          callback() {
            frm.reload_doc();
          }
        });
      }
    });

Typical footprint: ~40–80 LOC.
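Behind the button, the server-side method has to guard status transitions so that, for example, an approved suggestion cannot be approved again. The sketch below shows that guard in plain Python without any Frappe calls; the status names and the transition table are assumptions based on the Approve, Reject, and Recalculate actions in the specification.

```python
# Allowed (current status, action) pairs and the status they lead to.
TRANSITIONS = {
    ("Draft", "approve"): "Approved",
    ("Draft", "reject"): "Rejected",
    ("Draft", "recalculate"): "Draft",
    ("Rejected", "recalculate"): "Draft",
}


def apply_action(status: str, action: str) -> str:
    """Return the new status, or raise ValueError for a forbidden action."""
    try:
        return TRANSITIONS[(status, action)]
    except KeyError:
        raise ValueError(
            f"Action '{action}' is not allowed in status '{status}'"
        ) from None


print(apply_action("Draft", "approve"))
```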

3.6 ERPNext LOC Summary

| Component | LOC (approx.) |
|---|---|
| Python core logic | 250–400 |
| Reports | 70–120 |
| Client JS | 40–80 |
| Metadata JSON | 120–200 |
| Total (repo LOC) | 480–800 |

Logic-only LOC: ~320–600

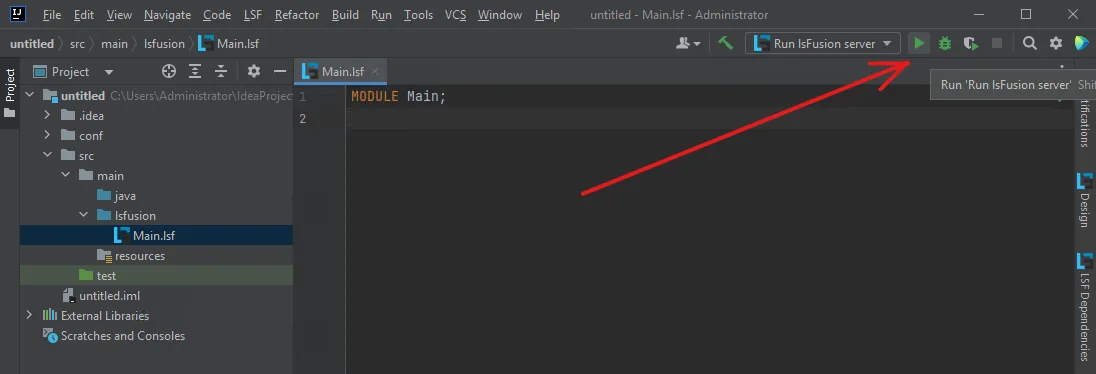

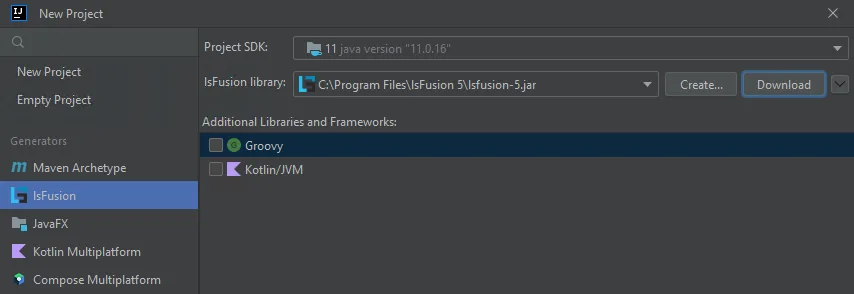

4. MyCompany Implementation

MyCompany is built on lsFusion, a declarative business logic platform.

Instead of writing procedural jobs, you define:

- Data properties

- Calculated expressions

- Actions

- Forms

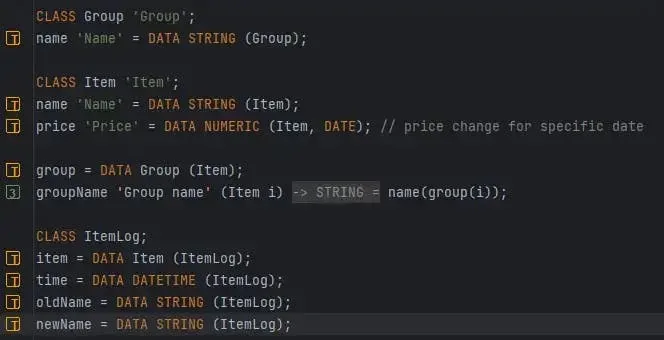

4.1 Data Definition

    CLASS Item;
    CLASS Warehouse;
    CLASS Rule;

    item = DATA Item (Rule);
    warehouse = DATA Warehouse (Rule);
    leadTime = DATA INTEGER (Rule);
    promotionFactor = DATA NUMERIC[10,2] (Rule);
    moq = DATA INTEGER (Rule);
    packSize = DATA INTEGER (Rule);

4.2 Calculated Properties

    avgSales(Item i, Warehouse w) =
        (SUM quantity(Sale s)
            WHERE s.item = i
              AND s.warehouse = w
              AND s.date >= currentDate() - 28)
        / 28;

    requiredQty(Rule r) =
        avgSales(item(r), warehouse(r))
        * promotionFactor(r)
        * leadTime(r);

    deficit(Rule r) =
        requiredQty(r)
        - currentStock(item(r), warehouse(r))
        - incoming(item(r), warehouse(r));
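For readers more at home in Python, the average-sales property corresponds roughly to the function below. It is an illustrative sketch: the tuple layout of `sales` and the function name are assumptions, and the window is taken as the 28 complete days before the run date. The total is divided by the window length, not by the number of days that had sales, so days with zero sales pull the average down as they should.

```python
from datetime import date, timedelta


def average_daily_sales(sales, item, warehouse, today, window_days=28):
    """Average daily quantity sold over the window before `today`.

    sales: iterable of (sale_date, item, warehouse, quantity) tuples.
    """
    start = today - timedelta(days=window_days)
    total = sum(
        qty
        for sale_date, sale_item, sale_wh, qty in sales
        if sale_item == item and sale_wh == warehouse and start <= sale_date < today
    )
    return total / window_days
```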

4.3 Rounding Logic

    roundedQty(Rule r) =
        MAX(
            moq(r),
            CEIL(deficit(r) / packSize(r)) * packSize(r)
        )
        IF deficit(r) > 0;

4.4 Action

    generateSuggestions() {
        FOR r IN Rule DO {
            IF deficit(r) > 0 THEN
                NEW Suggestion {
                    rule = r;
                    quantity = roundedQty(r);
                    explanation =
                        "ADS × LT – stock – incoming = " + deficit(r);
                };
        }
    }

4.5 UI

    FORM suggestions
        OBJECTS s = Suggestion
        PROPERTIES s.rule, s.quantity, s.explanation;

4.6 MyCompany LOC Summary

| Component | LOC (approx.) |
|---|---|
| Data definitions | ~25 |
| Business logic (properties) | ~60–90 |
| Rounding | ~10 |
| Actions | ~30–40 |
| UI | ~20–30 |
| Total | ~145–195 |

5. Side-by-Side Comparison

| Metric | ERPNext | MyCompany |
|---|---|---|
| Logic paradigm | Procedural (Python + glue) | Declarative (properties + actions) |
| Job scheduling | Explicit scheduled job + wiring | Action-oriented model (often less glue) |
| UI wiring | Frequently needs client-side scripting | Declarative forms |
| LOC (logic-only) | ~320–600 | ~100–150 |
| LOC (full repo) | ~480–800 | ~145–195 |
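Because both sides are ranges, the ratio between them is a range too. A quick calculation, using the totals from the two LOC summary tables (480–800 and 145–195), shows the spread; the function name `ratio_range` is just for illustration.

```python
erpnext_total = (480, 800)      # full-repo LOC, low and high estimate
mycompany_total = (145, 195)


def ratio_range(a, b):
    """Smallest, midpoint, and largest ratio between two (low, high) ranges."""
    lowest = a[0] / b[1]        # smallest numerator over largest denominator
    midpoint = sum(a) / sum(b)  # ratio of the range midpoints
    highest = a[1] / b[0]
    return round(lowest, 1), round(midpoint, 1), round(highest, 1)


print(ratio_range(erpnext_total, mycompany_total))  # (2.5, 3.8, 5.5)
```

The midpoint ratio is a little under 4×, and the extremes run from about 2.5× to about 5.5×, so any single multiplier quoted for this comparison should be read as a rough band.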

6. Architectural Implications

ERPNext extensions often spread logic across Python, JavaScript, and metadata. MyCompany tends to centralize business rules as a declarative model.

More lines of code usually mean:

- higher cognitive load

- more integration glue

- more surface area for regressions

This does not automatically make one platform “better”. It tells you what kind of change cost you are buying.

7. AI-assisted engineering economics

There is also a modern dimension that cannot be ignored: the amount and structure of code influences how efficiently AI tools can assist, refactor, and generate extensions.

- Context cost: more files and glue layers usually increase the context needed for correct AI assistance — and raise inference cost.

- Verification cost: fragmented procedural logic often requires more test scaffolding and runtime checks.

- Refactoring cost: more coupling points make safe automated refactors harder (for humans and AI).

- Knowledge capture cost: scattered logic increases documentation, prompting effort, and supervision time.

In other words, LOC is not only a proxy for human maintenance. It is increasingly a proxy for AI-assisted evolution cost.

8. When Each Platform Wins

Choose ERPNext if:

- You need a broad ERP quickly (accounting + procurement + stock) and you want a large ecosystem.

- Your replenishment rules are relatively standard and change slowly.

- You prefer Python and community familiarity over a modeling-first paradigm.

Choose MyCompany if:

- Your competitive advantage is in custom business rules and fast iterations.

- You expect frequent rule changes and want the system to stay explainable.

- You want ERP as a constructor: a model you evolve, not a product you patch.

9. Conclusion

Counting lines of code is not everything. But in complex business processes, LOC correlates with cognitive load — and cognitive load correlates with long-term maintenance cost.

In this replenishment scenario:

- ERPNext typically requires roughly 2–4× as much custom code.

- MyCompany often expresses the same rules in a smaller, more centralized logic surface.

Appendix A: Git diff simulation (how you would measure this honestly)

If you want the “how many lines” comparison to be defensible, measure it using a real repository diff and a LOC tool (for example cloc) with transparent inclusion rules. Below is a simulated but structurally realistic example.

ERPNext (Frappe app) — simulated diff

    $ git diff --stat
    app/replenishment/hooks.py | 34 +++++++
    app/replenishment/scheduler.py | 140 +++++++++++++++++++++
    app/replenishment/api.py | 88 +++++++++++++
    app/replenishment/utils/sales.py | 74 ++++++++++++
    app/replenishment/utils/stock.py | 92 +++++++++++++++
    app/replenishment/utils/rounding.py | 38 ++++++++
    app/replenishment/doctype/replenishment_rule/replenishment_rule.json | 165 +++++++++++++++++++++++++
    app/replenishment/doctype/replenishment_suggestion/replenishment_suggestion.json | 142 +++++++++++++++++++++++
    app/replenishment/report/replenishment_suggestions/replenishment_suggestions.py | 76 +++++++++++++
    app/replenishment/report/replenishment_suggestions/replenishment_suggestions.js | 58 ++++++++++
    app/replenishment/public/js/replenishment_suggestion_form.js | 62 +++++++++++
    11 files changed, 969 insertions(+)

Typical interpretation:

- Logic-only LOC = scheduler + utils + API + report Python + minimal JS (~320–600)

- Total repo LOC includes metadata JSON (~480–900+ depending on UI/report fixtures)

MyCompany (lsFusion module) — simulated diff

    $ git diff --stat
    modules/replenishment/Replenishment.lsf | 182 +++++++++++++++++++++++++++
    modules/replenishment/ReplenishmentForms.lsf | 54 ++++++++
    modules/replenishment/ReplenishmentDocs.lsf | 31 ++++
    3 files changed, 267 insertions(+)

The discipline that makes LOC comparisons meaningful: same specification, same measurement method, transparent inclusion rules.
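An inclusion rule is easiest to audit when it is written down as code. The sketch below is a deliberately simple stand-in for a tool like cloc, not a replacement for it: the function name `count_loc` and the rule "a line is a comment only if it starts with a comment prefix" are assumptions, and block comments are not handled.

```python
def count_loc(text: str, comment_prefixes=("#", "//")) -> dict:
    """Classify each line as code, comment, or blank."""
    counts = {"code": 0, "comment": 0, "blank": 0}
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            counts["blank"] += 1
        elif stripped.startswith(comment_prefixes):
            counts["comment"] += 1
        else:
            counts["code"] += 1  # trailing comments still count as code
    return counts


sample = """# nightly job
import frappe

def run():
    pass  # placeholder
"""
print(count_loc(sample))
```

The sample has five lines but only three lines of code, which is why raw `git diff --stat` insertions, which include blank and comment lines, come out higher than LOC figures for the same change.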
